Coming from a video games background, the world of fashion appears otherworldly. A place full of lurid colours and fantastical designs springing off the pages of glossy magazines and posters. It often makes games look monochrome and unimaginative by comparison.

But the fashion world is also distant and disconnected from games. So when the chance came up to attend a Digital Fashion workshop in Prague at the start of the summer, I was intrigued. Could technology provide a bridge between these worlds? How was technology changing and being used in this different, but highly creative industry? Here’s what I found.

Hardcore Tech

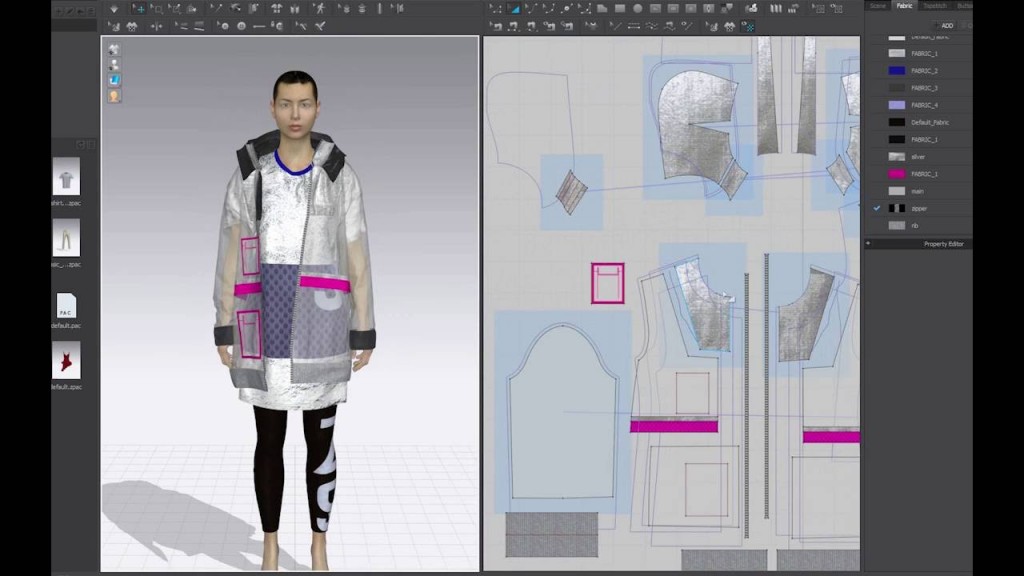

Soon after the workshop started, I was introduced to a program called Clo3D, which allows fashion designers to create digital garments. On the one hand, it lets designers sketch out the traditional 2D patterns (templates) used to make and manufacture clothes. But its real power lies in then giving a preview of how those clothes will look in 3D, as worn by an avatar. Clo3D effectively simulates the folds and drapes of the cloth in real time, meaning designers can see how their creations will look and move around on the wearer, before ever needing to cut and stitch a single piece of real material. It’s even possible to design garments in the 3D preview view, and then convert back to 2D patterns, for printing and making the clothes for real.

As a programmer, Clo3D impressed me a lot. It produces professional industry quality results whilst solving some very difficult maths and programming problems. Simulating different types of cloth / fabric in real time in a convincing way is definitely at the hardcore end of algorithm design and optimisation.

Hacking Concepts

I could see all these creative people making cool garments in Clo3D and similar programs. But then all they could do with them, it seemed, was just make a rendering of the clothes. Or export a static 3D model. That was no good if we ever wanted to arrive at our cyberpunk future of wearing digital clothes.

Coming from the technology side of things, I approached the workshop a bit like a hackathon or game jam. I wanted to produce something tangible, or at least try to make something and see if I could learn from it. Explore the space where fashion and technology intersect.

Since the theme of the workshop was “wearing pixels”, I set that as my goal. To see if by the end of the weekend, I could allow someone to “wear” a digital garment. To do this, there would be three parts to my plan:

1). Getting clothes others had designed into a general purpose package – in my case Unity3d, since that’s what I’m most familiar with.

2). Visualising the clothes on a person – To wear pixels, the wearer and others around them need to see the pixels somehow.

3). Giving the clothes life – To really feel like they were being worn, they should react and move in response to the wearer’s movements.

My original plan was to have the wearer see their digital clothes by way of an AR headset. When they looked down at themselves, they would see the digital garments superimposed on their body. (With the view of what the wearer was seeing duplicated on a monitor or TV for spectators). When the wearer moved their body, a Kinect (v2) sensor would track that movement and update the clothes to move correspondingly.

I figured out the easiest way to do this would be to make a “multiplayer game” in Unity. In this game, Player 1 would be a laptop + Kinect pointed at the wearer. The Kinect would track the wearer’s movements and use that to drive the movements of Player 1’s avatar in the virtual game world.

Player 2 would be the AR headset of the wearer, displaying the virtual game world superimposed over reality. In this way, the wearer would see Player 1’s virtual avatar moving around the world, copying their movements.

The clothes would first be exported from Clo3D (or wherever) as fbx or obj models. Physics would then be added back on to them by applying Unity cloth physics components to the models. Finally, they would be placed onto Player 1’s avatar, so that when the avatar moved, so would the clothes.

Getting the wearer to stand a known distance from the Kinect, plus a bit of maths, would ensure the virtual world and real world lined up: Player 1’s avatar would appear over the top of the wearer’s own body. Finally, I would make the avatar, but not its clothes, invisible, so the wearer would see just the digital clothes being worn by them, moving when they move.
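To give a flavour of the "bit of maths" involved, here is a minimal sketch in Python (not code from the Unity project). It assumes the sensor reports joints in metres relative to itself, with z pointing away from the sensor, and that we know where the sensor sits in the virtual world. The function name `kinect_to_world` and its parameters are hypothetical, and a real setup would also need to handle axis handedness and sensor tilt.

```python
import math

def kinect_to_world(joint, sensor_pos, sensor_yaw_deg):
    """Map a sensor-relative joint position into virtual-world coordinates.

    joint: (x, y, z) in metres relative to the sensor; z points away from
           the sensor (an assumed convention for this sketch).
    sensor_pos: (x, y, z) position of the sensor in the virtual world.
    sensor_yaw_deg: rotation of the sensor about the vertical (y) axis.
    """
    x, y, z = joint
    yaw = math.radians(sensor_yaw_deg)
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    # Rotate about the vertical axis, then translate by the sensor position.
    wx = cos_y * x + sin_y * z
    wz = -sin_y * x + cos_y * z
    return (wx + sensor_pos[0], y + sensor_pos[1], wz + sensor_pos[2])

# A wearer standing 2 m in front of a sensor mounted 1 m above the floor.
head = kinect_to_world((0.0, 0.6, 2.0), sensor_pos=(0.0, 1.0, 0.0), sensor_yaw_deg=0.0)
```

With the wearer at a known distance, the same transform applied to every tracked joint would place the avatar where the wearer actually stands.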

Purple Tai Chi Flags

Given the workshop was only 2 and a half days, I was very proud that I managed to mostly execute on the plan! I mashed up the demo project of off-the-shelf multiplayer game engine Photon, and amazingly, it played nicely with both the Kinect and the AR headset.

I ran out of time to get a proper 3D model moving with the Kinect, leaving the avatar as just the Kinect’s debug “skeleton” lines between the different joints and body parts. And I never got round to doing the maths for getting Player 1’s avatar to line up with the wearer’s body properly.

The result was a skeleton of purple lines and grey cubes, that the wearer could control with the Kinect, and see a short way off as an AR hologram in their headset. After getting various other workshop participants to try out the contraption, people seemed to actually prefer having the avatar not directly on themselves, treating it like looking at oneself in the mirror.

The network connection to the Photon multiplayer servers was also quite slow. The wearer would move one of their limbs, and their avatar would respond about half a second later. This may have been because of the crappy wifi connection at the workshop, or because I was using the free trial version of Photon. But either way, it helped slow down the whole experience and encouraged wearers to do more Tai Chi style moves, and less frantic disco dancing.

As well, the digital clothes themselves proved both a failure, and a happy accident. As a test, I made a simple plane (square) of Unity cloth physics, and attached it under the arm of the Kinect skeleton avatar. Once the wearer started moving their arms around, this square acted a bit like a flag, or the muleta (red cloth) a matador uses during a bullfight. It proved much more interesting to wave around than the “proper” garment I added: A simple Clo3D-made t-shirt I had placed around the core of the wearer’s body. Unlike the flowing plain square, the t-shirt jiggled around glitchily at best, and at worst, scrunched up into an unrecognisable jumble of pixels.

Quality That Never Came

After the workshop, I spent some further weekends trying to build on what I’d made. Without the time limits, I managed to get a male and female avatar to move around controlled by the Kinect. I made some clothes of my own in Clo3D, specifically designed so that importing them into Unity3D and adding cloth physics would be easy. And I spent ages tinkering with capsule and sphere colliders in Unity, trying to get my clothes to roughly stay on my avatars and not clip through them too much.
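For readers curious what a capsule collider does for cloth, here is a rough Python sketch of the underlying idea, not Unity's actual implementation: any cloth vertex that ends up inside a capsule (a line segment with a radius, standing in for a limb or torso) gets pushed back out to the surface. The function name `push_out_of_capsule` and its arguments are made up for illustration.

```python
import math

def push_out_of_capsule(p, a, b, radius):
    """Return p moved to the capsule surface if it lies inside the capsule.

    p: (x, y, z) cloth vertex. a, b: end points of the capsule's axis.
    radius: capsule radius. Points already outside are returned unchanged.
    """
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    ab_len2 = sum(v * v for v in ab)
    # Parameter of the closest point on the axis segment, clamped to [0, 1].
    if ab_len2 == 0:
        t = 0.0
    else:
        t = max(0.0, min(1.0, sum(ap[i] * ab[i] for i in range(3)) / ab_len2))
    closest = [a[i] + t * ab[i] for i in range(3)]
    d = [p[i] - closest[i] for i in range(3)]
    dist = math.sqrt(sum(v * v for v in d))
    if dist >= radius or dist == 0:
        # Outside already, or exactly on the axis (no direction to push in).
        return tuple(p)
    scale = radius / dist
    return tuple(closest[i] + d[i] * scale for i in range(3))

# A vertex 10 cm from the axis of a 50 cm radius "torso" gets pushed to its surface.
fixed = push_out_of_capsule((0.1, 0.5, 0.0), (0.0, 0.0, 0.0), (0.0, 1.0, 0.0), 0.5)
```

A few capsules and spheres like this only approximate a body, which is one reason clothes can still clip through an avatar.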

However, for all my extra efforts, the results never looked more than proof of concept. Usually with games, you can find some good smoke and mirrors tricks for making things look presentable. Or you can see that with the right application of skilled artists and programmers, the project will eventually reach a good level of visual quality. This was not the case for my wearing pixels project, and that proved quite disheartening. Our cyberpunk future is still some way off, at least for bedroom coders like me.

Photoshopping Fashion

This forced me to take a step back and re-think my approach. In the course of the workshop, we shared and discussed the work of many different people and projects that fell under the broad “digital fashion” umbrella.

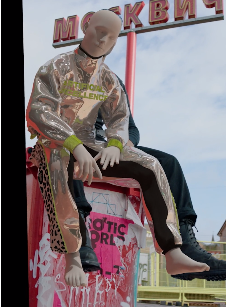

In particular, I was very taken by the Digital Collection by Scandinavian fashion store Carlings. They designed a line of digital clothes in Clo3D (or whatever other program). But rather than print out the designs and make them for real, they instead got customers to pick one of the digital garments, and send in photos of themselves striking a pose. The designers would dress an avatar in Clo3D in the digital clothes the customer had bought, and match the avatar’s pose to the pose of the customer in the photo. Next they took a screenshot/render, and cropped out the avatar and background, leaving just the clothes. Lastly, they pasted the clothes onto the customer’s photo. The customer got back in return the photo of them now magically wearing the digital clothes.

Initially, I thought this was a bit of a cheap trick. Aside from designing the clothes themselves, it was essentially something so simple, even I could do it with my rudimentary photoshopping skills. I started to think up how this could be automated. Using machine learning to detect poses in the photos sent by customers. Having a script to control the camera and avatar posing in Clo3D. Take a screenshot and use edge detection algorithms or again, machine learning to pick the clothes out of the screenshot. Apply some light feathering, paste onto the original photo. Voila!
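The last two steps of that imagined pipeline (feather, then paste) are simple enough to sketch. The Python below is an illustration only, assuming images are nested lists of RGB tuples and that a 0/1 mask marking the clothes pixels already exists. The names `feather` and `composite` are my own, and a box blur is just one simple way to soften edges.

```python
def feather(mask, radius=1):
    """Soften a 0/1 mask with a box blur so the clothes' edges fade out."""
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            total, count = 0.0, 0
            # Average the mask over the neighbourhood that lies inside the image.
            for dr in range(-radius, radius + 1):
                for dc in range(-radius, radius + 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < h and 0 <= cc < w:
                        total += mask[rr][cc]
                        count += 1
            out[r][c] = total / count
    return out

def composite(photo, clothes, alpha):
    """Alpha-blend the clothes render over the photo, pixel by pixel."""
    return [
        [
            tuple(round(a * f + (1 - a) * b) for f, b in zip(fg, bg))
            for fg, bg, a in zip(fg_row, bg_row, a_row)
        ]
        for fg_row, bg_row, a_row in zip(clothes, photo, alpha)
    ]

# Tiny 1x2 example: the left pixel is half clothes, the right pixel is all photo.
photo = [[(0, 0, 0), (10, 20, 30)]]
clothes = [[(200, 100, 50), (255, 255, 255)]]
result = composite(photo, clothes, [[0.5, 0.0]])
```

In practice an image library would do this far faster, but the arithmetic is the same weighted average per pixel.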

It was only after struggling with my Kinect experiments that I realised I was missing the point. The Carlings Digital Collection was simple and effective.

Wear Next?

That’s not to say there aren’t others out there trying to solve the difficult technical problems I struggled with. However, they tend to have VC money or a PhD in Mathematics. Or both, which I don’t.

Moving forward, I want to make a “fashion game”, using the skills I’ve already honed, plus the new knowledge and connections I made at the workshop. Of course that raises the question of what a “fashion game” is, but that’s a fun question to answer for a future blog.

I really enjoyed reading about your journey. I would love to see your tryouts from after the workshop as well, if you’d like to share.

And I’m super excited about the fashion game, of course!

See you very soon!!

Paula